This thread has been requested before, and I’m finally setting it up now. Apologies for the delay! This thread is for general discussion about FSD and its development. You can ask for changes to the title and the opening post (Masters can also edit the title themselves if they wish).

@JukkaM, @DayTraderXL, @Mark_Renton

“In the next three to five years I don’t see any technical possibility of bringing a system onto the market that is only camera-based.”

OK, fair enough. Johann Jungwirth is not just some manager-level guy, though, and he stated this at CES only a week ago. In the New York video you linked, MobileEye does demonstrate its camera-based subsystem, but:

- YouTube is full of Tesla FSD videos, but has Tesla actually solved L4 with FSD?
- MobileEye tests camera-only and lidar/radar-only systems separately, but in its L4 system it combines them: “In our level 4 test vehicles both are fused to achieve higher mean time between failures and more scalable validation process.”
- The system used by the New York test car “is almost identical to our supervision product.” Supervision is L2+. MobileEye’s website says that it still requires human oversight.
- The New York video also uses AV maps.

In summary: MobileEye’s L4 requires both lidar/radar and cameras. L2+ is camera-only.

Besides, you’re overstating the case when you say that camera-based L4 has been solved. It has reached a level that is sufficient for test use, but production use could be a surprisingly tight squeeze. I’m sure MobileEye’s Johann Jungwirth chose his words carefully for CES.

It does not refer to L5 but explains why traditional lane assist systems only work for half of the kilometers driven. Mobileye’s “Lane departure warning system”, on the other hand, is better because it uses AV maps.

Lane guidance is based on previously collected data from the line that other vehicles were driving on this road.

Moreover, L4 is a very flexible definition. Here is an excerpt from the Wiki definition of L4:

self-driving is supported only in limited spatial areas (geofenced) or under special circumstances.

A geofence can be a very narrow area, within which lane markings are perfectly maintained and the area has precise HD maps. And it’s enough for the system to work in dry weather during the day.

I personally believe there will be no “big leap.” Instead, development will be incremental. First, AV (Autonomous Vehicle) trucks will become more common, driving goods from factory to port or within a factory area. Or delivery vans on restricted routes. In addition, vehicles will be monitored from cabins.

For passenger cars, I believe in the German approach. Before L4 robotaxis, we will see traffic jam assistants, meaning L3 will first come for traffic jam driving on highways. Robotaxis in capital cities are mainly marketing material.

Tesla FSD Beta 10.9 Out

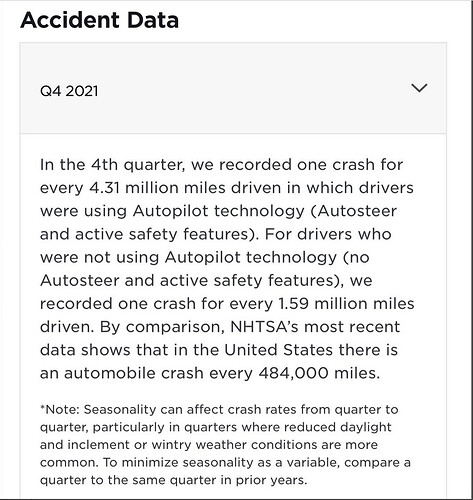

Tesla has released its Q4 2021 accident report, so the “Tesla FSD doesn’t work” spam can stop now. It adds no factual value to the discussion.

Well, it still doesn’t work; that data doesn’t prove otherwise. Also, it’s Autopilot, not FSD.

It’s worth looking beyond the so-called statistics; I believe there’s been a lot of discussion about this in the Tesla thread.

https://www.tesla.com/VehicleSafetyReport

It’s not a report, it’s an advertisement. When the numbers are reported at such a general level, they tell you nothing. You can only compare three numbers: Tesla with Autopilot, Tesla without Autopilot, and the national average.

we recorded one crash for every 4.31 million miles driven in which drivers were using Autopilot technology (Autosteer and active safety features). For drivers who were not using Autopilot technology (no Autosteer and active safety features), we recorded one crash for every 1.59 million miles driven. By comparison, NHTSA’s most recent data shows that in the United States there is an automobile crash every 484,000 miles.
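
To put the three quoted figures on the same scale, here is a quick back-of-the-envelope sketch in Python. It only uses the numbers from the quote above; the dictionary keys and function names are my own, and the calculation says nothing about how the crashes were counted or where the miles were driven.

```python
# Miles driven per recorded crash, taken from the quote above.
# The key names are labels chosen for this sketch, not Tesla or NHTSA terms.
MILES_PER_CRASH = {
    "tesla_autopilot": 4_310_000,
    "tesla_no_autopilot": 1_590_000,
    "us_average_nhtsa": 484_000,
}


def crashes_per_million_miles(miles_per_crash: float) -> float:
    """Convert 'one crash every N miles' into crashes per million miles."""
    return 1_000_000 / miles_per_crash


def relative_rate(group: str, baseline: str) -> float:
    """Crash rate of `group` as a fraction of the crash rate of `baseline`."""
    return MILES_PER_CRASH[baseline] / MILES_PER_CRASH[group]


for name, miles in MILES_PER_CRASH.items():
    rate = crashes_per_million_miles(miles)
    print(f"{name}: {rate:.2f} crashes per million miles")

print(f"no Autopilot vs US average: {relative_rate('tesla_no_autopilot', 'us_average_nhtsa'):.2f}")
print(f"Autopilot vs no Autopilot:  {relative_rate('tesla_autopilot', 'tesla_no_autopilot'):.2f}")
```

On these figures, Teslas driven without Autopilot crash at about 0.30 times the national rate, and Teslas on Autopilot at about 0.37 times the no-Autopilot rate. The ratios are only as meaningful as the three inputs are comparable.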

- Where does Tesla get its own number? That is, how does Tesla know that a car has been involved in an accident, and how does it know whether Autopilot was on?
- Autopilot is mainly used on highways, where the crash rate per mile driven is naturally low.
- Without Autopilot, Tesla vehicles crash at roughly one-third of the national average rate (one crash per 1.59 million miles versus one per 484,000 miles). Is this thanks to the car or the driver? (Note: Autopilot and active safety measures disabled.) I think we should congratulate Tesla drivers more than Tesla here. In Finland, six percent of vehicles had defects that contributed to the accident. The driver’s condition, such as alcohol, illness, fatigue, or mental state, was involved in a total of 69 percent of accidents.
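
The highway point can be made concrete with a small Python sketch. All numbers below are invented for illustration; they are not estimates of real crash rates or of how Tesla drivers actually use Autopilot. The assisted and manual modes get exactly the same crash rate on each road type, and only the mix of highway and city miles differs.

```python
# Hypothetical crashes per million miles by road type.
# Deliberately identical for assisted and manual driving.
RATE_BY_ROAD = {"highway": 0.5, "city": 2.0}

# Hypothetical share of miles driven on each road type in each mode.
MILE_SHARE = {
    "assisted": {"highway": 0.9, "city": 0.1},
    "manual": {"highway": 0.4, "city": 0.6},
}


def aggregate_rate(shares: dict[str, float]) -> float:
    """Overall crashes per million miles for a given road-type mix."""
    return sum(shares[road] * RATE_BY_ROAD[road] for road in RATE_BY_ROAD)


for mode, shares in MILE_SHARE.items():
    print(f"{mode}: {aggregate_rate(shares):.2f} crashes per million miles")
```

With these made-up inputs the assisted mode comes out at roughly 0.65 and the manual mode at roughly 1.40 crashes per million miles, even though the system is no safer than the driver on any given road. That is why an aggregate comparison needs a breakdown by road type before it says anything about the system itself.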

To everyone claiming FSD has had no accidents, here’s an example:

For some reason, Tesla got the video removed from YouTube, but the video and an explanation of what happened are there.

FSD wanted to go straight into oncoming traffic on a curve; the driver had to use so much force to disengage FSD that the resulting overcorrection sent the car into the bushes. For that reason this goes into Tesla’s “user error” category, even though everyone knows the fault was with FSD.

And everyone who has watched FSD videos knows that there are hundreds/thousands of videos where FSD would have crashed into a wall/gone off-road/hit cars/people if the driver hadn’t intervened in time.

Since some discussants believe that Tesla FSD Beta doesn’t work, here’s 70 minutes of Tesla FSD Beta 10.9 driving without driver intervention.

Looks quite reasonable. The driver seems a bit scared, as their hands keep twitching towards the steering wheel. The environment is also quite easy. But promising nonetheless. A robotaxi for the Finnish winter in 2050?

https://twitter.com/SCMPNews/status/1483451279288455169?t=uaxAX9CT6KdmKRutFdvlmw&s=19

Experts, what do you think about the Chinese claims?

200 robotaxis in commercial traffic in Beijing. They use V2X and 5G networks and operate within a geofence.

This bites a bit into the credibility of Tesla’s lead on the topic:

This presentation gives a good overview of the required sensors and the number of cameras:

Except that around the 5:30 mark of the video the driver has to correct it, as the car stubbornly wants to drive in the outermost lane even though cars are parked there.

Now 10.10 is already out, but it’s apparently worse than 10.9 in some cases. For example: https://www.youtube.com/watch?v=VLqAwp3MUHw

This is interesting because once we start getting videos from regular users, we’ll be able to compare the performance between different operators.

This would be an interesting test intersection for all self-driving car companies. Tesla has managed it several times, even though the car clearly hasn’t been taught to use the center median. I’m sure they’ll figure it out at some point…

It looks like in version 10.9, this same intersection went a lot better (the first attempt in the video was a big mistake). Following FSD (Full Self-Driving) Beta, it feels like we sometimes take one step backward and then two steps forward. It’s admittedly a challenging intersection that requires extreme caution even from a human.

The company wants to keep the discussion related to autonomous driving in this thread…

“Car manufacturers” don’t test like Tesla because they don’t have the opportunity to test like Tesla. Tesla’s fleet is in the millions, while others’ are in the hundreds. Most likely, other systems are so expensive that a normal person can’t afford them.

FSD beta could be compared to a teenager driving with a learner’s permit and a parent teaching them; you always have to be ready to intervene and take control.

Here’s a recent video from an FSD beta tester who shares their opinion on the horrified reactions from naysayers regarding this topic:

https://youtu.be/Lmy5PxQGtwY